Seeing Behind the Camera:

Identifying the Authorship of a Photograph

Abstract

We introduce the novel problem of identifying the photographer behind

the photograph. To explore the feasibility of current computer vision

techniques to address this problem, we created a new dataset of over

180,000 images taken by 41 well-known photographers. Using this dataset,

we examined the effectiveness of a variety of features (low and high-level,

including CNN features) at identifying the photographer. We also trained

a new deep convolutional neural network for this task. Our results show

that high-level features greatly outperform low-level features at this

task. We provide qualitative results using these learned models that

give insight into our method's ability to distinguish between photographers,

allow us to draw interesting conclusions about what specific photographers

shoot, and demonstrate two applications of our method.

Experimental Results

For every feature in the table below (except TOP which assigns the max

output of PhotographerNET as the photographer label) we train a one-vs-all

multiclass SVM using a linear kernel. We report the F-measure for each

of the features tested. We observe that the deep features significantly

outperform all low-level standard vision features. We also observe that

Pool5 is the best feature within both CaffeNet and Hybrid-CNN. Since

Pool5 roughly corresponds to parts of objects, we can conclude that

seeing the parts of objects, not the full objects, is most discriminative

for identifying photographers. This is intuitive because an artistic

photograph contains many objects, so some of them may not be fully visible.

For more details and other observations, see our paper.

| Low |

High |

|

|

Caffenet |

Hybrid-CNN |

PhotographerNET |

| Color |

GIST |

SURF-BOW |

Object Bank |

Pool5 |

FC6 |

FC7 |

FC8 |

Pool5 |

FC6 |

FC7 |

FC8 |

Pool5 |

FC6 |

FC7 |

FC8 |

TOP |

| 0.31 |

0.33 |

0.37 |

0.59 |

0.73 |

0.7 |

0.69 |

0.6 |

0.74 |

0.73 |

0.71 |

0.61 |

0.25 |

0.25 |

0.63 |

0.47 |

0.14 |

T-SNE Visualizations

Hybrid-CNN Pool5

PhotographerNET FC7

Our T-SNE embeddings project the high dimensional feature vectors from

each image into a low dimensional space. We then plot the embedded features

by representing them with their corresponding photographs. By embedding

features in this way, we are able to see how the features split the

image space and gain insight into what the features are using to perform

their classifications. In the examples shown here, we observe that H-Pool5

divides the image space in semantically meaningful ways. For example,

we see that photos containing people are grouped mainly at the top right,

while buildings and outdoor scenes are at the bottom. In contrast, PhotographerNET’s

P-FC7 divides the image space along the diagonal into black and white

vs. color regions. It is hard to identify semantic groups based on the

image’s content. More discussion of these visualizations can be found

in our paper. Larger results of these and other features are available

in our supplementary material.

New Photograph Generation

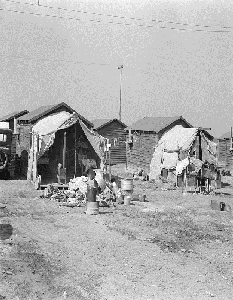

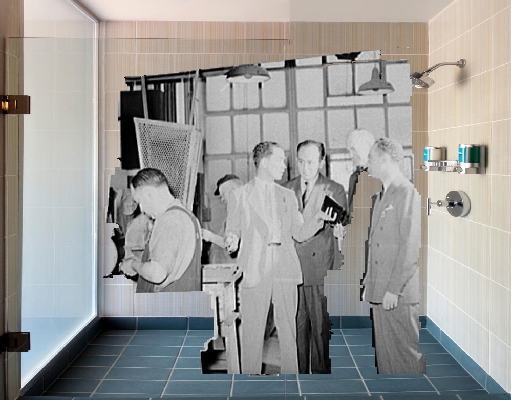

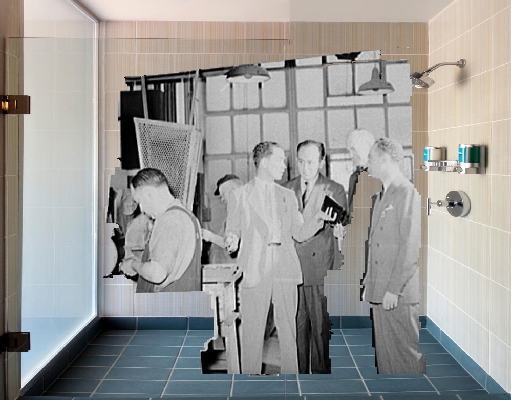

Delano Pastiche

Erwitt Pastiche

Highsmith

Pastiche

Delano Authentic Photographs

Erwitt

Authentic Photograph

Highsmith

Authentic Photograph

Hine Pastiche

Horydczak Pastiche

Rothstein

Pastiche

Hine Authentic Photograph

Horydczak Authentic Photographs

Rothstein

Authentic Photograph

We wanted to see whether we could take our photographer models a step

further by actually generating new photographs imitating photographers’

styles. We first learned what types of scenes each photographer tended

to shoot. Then, in each scene type, we learned what objects the photographer

tended to shoot and where in the photograph they tended to be. Using

our learned probability models for each photographer, we probabilistically

sampled a scene type, the objects to appear in that scene, and the spatial

location of each object. We then used an object detector to find and

localize instances of those objects in the photographer's data, segmented

them out, and pasted them in the probabilistically chosen location.

We show several examples of generated photographs above from our paper

above. For each generated photograph, we also show at least one authentic

photograph. We note that many of the generated photographs bear similarity

with the real photographer's work. We provide many more generation results

in our supplementary material.